OpenClaw/Moltbot Security: Analysis and Risk Mitigation for Agentic AI

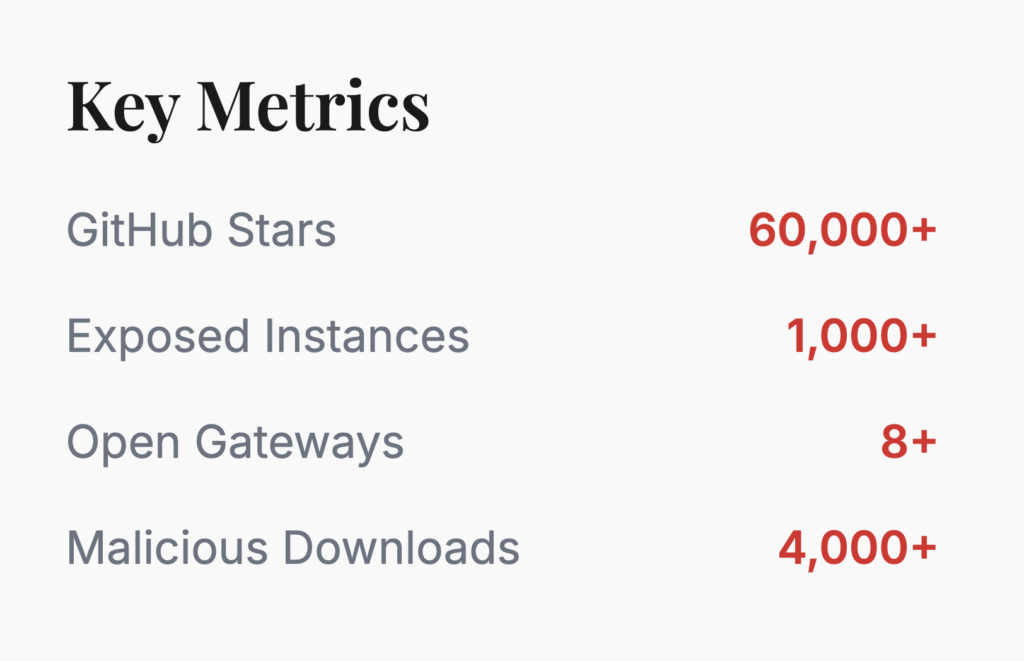

OpenClaw (previously Moltbot, originally Clawdbot) represents a paradigm shift in personal AI assistance, functioning as a locally hosted, autonomous agent with system-level execution capabilities. Originally launched as Clawdbot and renamed Moltbot over trademark concerns, the project gathered more than 60,000 GitHub stars within weeks of release, driven by its promise of a “Personal AI Assistant” that bridges communication with hardware control.


Moltbot (Formerly Clawdbot) Architecture and Capabilities

Unlike traditional cloud-based chatbots operating within sandboxed environments, Moltbot implements a three-tier distributed architecture comprising the Gateway (central control plane), Execution Nodes (device-specific agents), and Communication Channels (adapters for messaging platforms).

The Moltbot Gateway operates as a long-running Node.js process typically bound to port 18789, managing WebSocket connections, HTTP APIs, and authentication while routing commands between users and AI models.

Execution Nodes provide direct access to host machine resources, enabling capabilities that include arbitrary shell command execution (system.run), unrestricted file system access, browser automation via Chrome DevTools Protocol (CDP), and integration with messaging platforms including WhatsApp, Telegram, Discord, Slack, Signal, and iMessage.

This architecture allows the AI to perform proactive tasks such as monitoring directories, executing scheduled cron jobs, screening communications, and automating complex multi-step workflows across local and remote systems.

Local-First Design vs. Cloud Security Models

The local-first architecture fundamentally inverts traditional cloud security paradigms.

Moltbot transfers the entire security burden to the end user, creating a deployment model where data sovereignty comes at the cost of security complexity.

The system stores sensitive credentials, API keys, and persistent memory (conversation histories, user preferences) in plaintext JSON and Markdown files within the ~/.moltbot directory, protected only by filesystem permissions.

Moltbot requires users to function as their own security administrators, managing Node.js runtime patches, network segmentation, and access controls without the benefit of enterprise-grade monitoring. The default configuration binds the Gateway to localhost (127.0.0.1) for security, but the architecture readily permits reconfiguration for remote access, creating a dangerous gap between secure defaults and common deployment practices that prioritize convenience over protection.

The “Sovereignty Trap”: User Control vs. Security Responsibility

Moltbot embodies the “Sovereignty Trap”—a critical tension where users gain complete control over their data and AI behavior but inherit security responsibilities requiring enterprise-grade expertise.

The platform ships without security guardrails by default, enabling non-technical users to deploy high-privilege AI agents with shell access without encountering security friction or validation checkpoints.

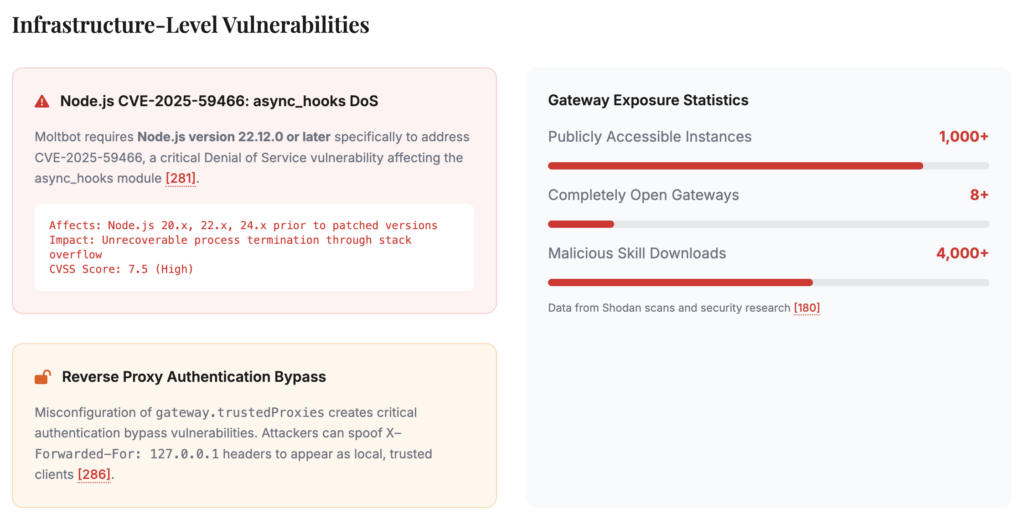

Security researchers have identified over 1,000 publicly exposed Moltbot gateways on the internet, many lacking authentication entirely, with at least eight instances confirmed to be completely open and allowing unauthenticated remote command execution.

These exposures leak API keys for Anthropic and OpenAI, OAuth tokens for messaging platforms, and full conversation histories containing sensitive personal and business data.

Moltbot Risk Assessment

Moltbot operates with the full privileges of the user account that runs it (root-level if that account is root), creating a high-impact attack surface.

High-Privilege Local Execution Model

The agent’s capabilities include executing arbitrary bash commands, reading and writing files across the entire user directory, controlling browser sessions with access to authenticated cookies, and managing API credentials for integrated services.

Because of this “spicy” access level, which the project’s own documentation acknowledges, any successful prompt injection, authentication bypass, or malicious skill installation grants attackers the same capabilities as the legitimate user, including access to SSH keys, cryptocurrency wallets, and corporate VPN credentials. The agent’s autonomous decision-making amplifies the risk further.

Network Exposure and Remote Access Vulnerabilities

By default, the service binds to localhost, but users frequently reconfigure it to accept external connections for remote access convenience, exposing the WebSocket control plane and HTTP tool invocation endpoints to the internet.

Security audits have revealed hundreds of exposed instances discoverable via Shodan scans, with configurations leaking live API keys, bot tokens, and allowing unauthenticated command execution.

Supply Chain and Third-Party Integration Risks

Moltbot’s extensibility through the ClawdHub skills marketplace introduces severe supply chain vulnerabilities.

Security researchers demonstrated this risk by uploading a benign proof-of-concept skill to ClawdHub, artificially inflating its download count to over 4,000 installations across developers in seven countries, proving that malicious actors could distribute backdoored packages at scale.

Third-party integrations with messaging platforms create additional attack surfaces.


Infrastructure-Level Vulnerabilities

The combination of unmoderated code distribution, excessive default permissions, and plaintext credential storage creates a supply chain attack surface comparable to early npm vulnerabilities but with higher privileges and less oversight.

Node.js CVE-2025-59466: async_hooks DoS Vulnerability

Moltbot requires Node.js version 22.12.0 or later specifically to address CVE-2025-59466, a critical Denial of Service (DoS) vulnerability affecting the async_hooks module. This vulnerability allows attackers to trigger unrecoverable process termination through stack overflow errors in asynchronous resource tracking, bypassing standard exception handling mechanisms (process.on('uncaughtException')) and causing immediate Gateway crashes.
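Since the article ties Gateway safety to a minimum Node.js release, a scripted check is handy during setup. The following is a minimal Python sketch, assuming node is on PATH and that node --version prints a string like v22.12.0; the 22.12.0 threshold is simply the figure given in this section, so confirm it against the project's current release notes. Versions are compared as integer tuples, because plain string comparison would wrongly rank v22.9.0 above v22.12.0.

```python
import re
import shutil
import subprocess
from typing import Optional, Tuple

# Threshold as stated in this article; verify against the project's own docs.
MIN_NODE: Tuple[int, int, int] = (22, 12, 0)


def parse_node_version(text: str) -> Tuple[int, int, int]:
    """Turn `node --version` output such as 'v22.12.0' into (22, 12, 0)."""
    match = re.fullmatch(r"v?(\d+)\.(\d+)\.(\d+)", text.strip())
    if match is None:
        raise ValueError(f"unrecognized Node.js version string: {text!r}")
    major, minor, patch = (int(part) for part in match.groups())
    return major, minor, patch


def meets_minimum(version: Tuple[int, int, int],
                  minimum: Tuple[int, int, int] = MIN_NODE) -> bool:
    # Tuple comparison is element-wise, so (22, 9, 5) < (22, 12, 0) as intended.
    return version >= minimum


def installed_node_version() -> Optional[Tuple[int, int, int]]:
    """Return the local Node.js version, or None if `node` is not on PATH."""
    node = shutil.which("node")
    if node is None:
        return None
    result = subprocess.run([node, "--version"], capture_output=True, text=True, check=True)
    return parse_node_version(result.stdout)


if __name__ == "__main__":
    current = installed_node_version()
    if current is None:
        print("node not found on PATH")
    else:
        verdict = "OK" if meets_minimum(current) else "UPGRADE REQUIRED"
        print("node", ".".join(map(str, current)), verdict)
```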

Node.js CVE-2026-21636: Permission Model Bypass

CVE-2026-21636 represents a permission model bypass vulnerability (CVSS 5.8) that undermines Node.js’s experimental permission controls, allowing Unix Domain Socket (UDS) connections to circumvent network restrictions.

While IP-based networking operations are correctly gated behind --allow-net permissions, UDS connections through net, tls, and undici/fetch APIs were not consistently subjected to equivalent permission checks, enabling applications with restricted network permissions to connect to arbitrary local sockets.

For Moltbot deployments utilizing Node.js permission flags to sandbox agent capabilities, this vulnerability effectively nullifies security boundaries, allowing attacker-controlled inputs to access privileged local services such as Docker sockets (/var/run/docker.sock), database connections, or internal message queues.
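For anyone wrapping the agent in their own sandbox, the lesson is that every transport has to pass the same gate. The sketch below is a hypothetical deny-by-default egress check in Python, not Node.js's permission model and not a Moltbot API; the unix: prefix for socket targets and the EgressPolicy class are assumptions made for illustration. A Unix socket is reachable only if its path is listed explicitly, so /var/run/docker.sock stays blocked even when some TCP destinations are allowed.

```python
from dataclasses import dataclass
from pathlib import PurePosixPath
from typing import FrozenSet, Tuple


@dataclass(frozen=True)
class EgressPolicy:
    """Hypothetical allowlist covering both TCP endpoints and Unix domain sockets."""
    allowed_hosts: FrozenSet[Tuple[str, int]] = frozenset()
    allowed_sockets: FrozenSet[str] = frozenset()  # empty: no local sockets at all

    def permits(self, target: str) -> bool:
        if target.startswith("unix:"):
            # Normalise redundant slashes and "." segments; ".." is left in place,
            # so traversal tricks simply fail to match the allowlist.
            path = str(PurePosixPath(target[len("unix:"):]))
            return path in self.allowed_sockets
        host, sep, port = target.rpartition(":")
        if not sep or not host or not port.isdecimal():
            return False  # malformed targets are denied, never waved through
        return (host.lower(), int(port)) in self.allowed_hosts
```

In practice this kind of rule is more robust when it is also enforced outside the process, for example by never mounting the Docker socket into the agent's environment.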

Reverse Proxy Authentication Bypass (X-Forwarded-For Spoofing)

When Moltbot is deployed behind reverse proxies (nginx, Caddy, Traefik, Cloudflare), misconfiguration of the gateway.trustedProxies parameter creates critical authentication bypass vulnerabilities.

By default, Moltbot treats connections from 127.0.0.1 (localhost) as trusted, auto-approving device pairing and potentially bypassing authentication requirements for local clients. However, when operating behind proxies, the Gateway relies on X-Forwarded-For and X-Real-IP headers to determine client origin.

If gateway.trustedProxies is not explicitly configured to whitelist specific proxy IP addresses, attackers can directly connect to the Gateway port while spoofing X-Forwarded-For: 127.0.0.1 to appear as a local, trusted client, effectively bypassing token-based authentication.
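The safe rule is to believe forwarding headers only when the TCP peer is itself a configured proxy. The Python sketch below illustrates that rule under stated assumptions: trusted_proxies stands in for gateway.trustedProxies, X-Forwarded-For is a comma-separated list that the proxy appends to, and resolve_client_ip and may_skip_auth are hypothetical names rather than Moltbot's implementation. With an empty list, the header is ignored entirely, so a direct connection carrying X-Forwarded-For: 127.0.0.1 is still attributed to its real address.

```python
import ipaddress
from typing import List, Optional


def resolve_client_ip(peer_ip: str, forwarded_for: Optional[str],
                      trusted_proxies: List[str]) -> Optional[str]:
    """Return the real client address, or None when it cannot be established safely."""
    trusted = [ipaddress.ip_network(p, strict=False) for p in trusted_proxies]

    def is_trusted(addr) -> bool:
        return any(addr in net for net in trusted)

    peer = ipaddress.ip_address(peer_ip)
    if not is_trusted(peer):
        # Direct connection: the header is attacker-controlled, so ignore it.
        return str(peer)

    # Came through our proxy: walk right-to-left past our own proxies.
    # The first hop we do not control is the real client.
    hops = [h.strip() for h in (forwarded_for or "").split(",") if h.strip()]
    for hop in reversed(hops):
        try:
            addr = ipaddress.ip_address(hop)
        except ValueError:
            return None  # malformed entry: origin unknown
        if not is_trusted(addr):
            return str(addr)
    return None  # nothing but proxies in the chain: origin unknown


def may_skip_auth(client_ip: Optional[str]) -> bool:
    """Only a verified loopback origin gets local trust; unknown origins must authenticate."""
    return client_ip is not None and ipaddress.ip_address(client_ip).is_loopback
```

The logic assumes the proxy appends the connecting address (as nginx's $proxy_add_x_forwarded_for does) or overwrites the header; a proxy that passes a client-supplied header through unchanged defeats any check of this kind.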

Gateway Exposure and Localhost Trust Bypass

Users frequently configure gateway.bind to 0.0.0.0 (all interfaces) or specific public IP addresses to enable remote management, transforming the Gateway into a publicly accessible remote command execution interface. Security researchers using Shodan have identified between 900 and 1,862 publicly accessible Moltbot instances, with eight confirmed to be completely open and allowing unauthenticated administrative access.
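A quick way to reason about gateway.bind is to classify the address it holds. The helper below is a hypothetical Python sketch that assumes the setting contains a literal IP address or localhost (the real option may accept other forms); any result other than loopback means the Gateway is reachable from other machines and needs authentication plus firewalling.

```python
import ipaddress


def classify_bind(address: str) -> str:
    """Classify a gateway bind address by how far it exposes the listener."""
    address = address.strip()
    if address.lower() == "localhost":
        return "loopback"
    ip = ipaddress.ip_address(address)
    if ip.is_loopback:
        return "loopback"          # 127.0.0.0/8 or ::1: this machine only
    if ip.is_unspecified:
        return "all-interfaces"    # 0.0.0.0 or ::, i.e. every NIC, including public ones
    if not ip.is_global:
        return "internal"          # RFC 1918, 100.64.0.0/10, link-local, etc.
    return "public"                # routable on the internet
```

Note that 100.64.0.0/10, the range Tailscale assigns addresses from, is reported as internal rather than public, which suits a tailnet-only setup but still exposes the Gateway to every device on that tailnet.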

Application-Level Attack Vectors

Prompt Injection via Trusted Inputs (Email, Web Content, Documents)

Unlike traditional code injection, which targets parsing vulnerabilities, prompt injection manipulates the AI’s reasoning layer by hiding malicious directives within seemingly benign emails, PDF documents, web pages, or chat messages that the agent processes as part of its normal operation.

Security researchers have demonstrated practical exploits where hidden instructions in emails caused Moltbot to forward the user’s last five emails to an attacker-controlled address within five minutes.
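Because the model itself cannot be relied on to spot hidden instructions, the check has to live outside the model. One pattern, often called taint tracking, is sketched below in Python: once a turn has read untrusted content such as an inbound email, sensitive tool calls need explicit human confirmation. The tool names, the Turn class, and the confirm callback are hypothetical; a stricter variant confirms every sensitive call regardless of taint, which is closer in spirit to tools.exec.ask set to always.

```python
from dataclasses import dataclass, field
from typing import Callable, List

# Hypothetical tool names; Moltbot's actual tool identifiers may differ.
SENSITIVE_TOOLS = {"system.run", "email.send", "file.write", "browser.evaluate"}


@dataclass
class Turn:
    """Records where the content the model saw during this turn came from."""
    sources: List[str] = field(default_factory=list)
    tainted: bool = False

    def ingest(self, source: str, trusted: bool) -> None:
        self.sources.append(source)
        if not trusted:
            self.tainted = True  # taint is sticky for the rest of the turn


def authorize(turn: Turn, tool: str, confirm: Callable[[str], bool]) -> bool:
    """Decide, outside the model, whether a requested tool call may run."""
    if tool not in SENSITIVE_TOOLS or not turn.tainted:
        return True
    prompt = f"Agent wants to run {tool} after reading: {', '.join(turn.sources)}. Allow?"
    return bool(confirm(prompt))
```

Under this policy, the email-forwarding demonstration would stall at a confirmation prompt instead of completing silently, although a user who approves without reading is still exposed.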

Browser Control Exposure and Memory Iframe Risks

Moltbot’s browser automation capabilities, implemented via Chrome DevTools Protocol (CDP), create significant security risks when processing untrusted web content. The browser.evaluateEnabled configuration option, which defaults to true in many deployments, allows the AI agent to execute arbitrary JavaScript code within webpage contexts, effectively granting attackers who control web content the ability to steal session cookies, bypass multi-factor authentication, or perform unauthorized actions on authenticated websites.

The “memory iframe” concept refers to the agent’s ability to maintain persistent browser contexts across sessions, creating long-lived authenticated sessions to web services including banking, corporate VPNs, and cloud consoles.

Security audits specifically warn about “browser control remote exposure,” recommending that browser control nodes be restricted to tailnet-only networks with deliberate node pairing and avoidance of public exposure.

WebSocket API Authentication Weaknesses

The Gateway’s WebSocket protocol handles real-time communication between clients and the agent, but contains implementation weaknesses that facilitate credential theft and session hijacking. Device tokens issued during pairing are scoped to specific roles, but the initial pairing process auto-approves connections that originate from localhost without explicit user consent, allowing any local process, including malware or compromised browser extensions, to pair automatically as a new device with operator privileges.
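A common remedy is to require a short one-time code for every new device, whatever address it connects from. The Python sketch below assumes hypothetical start_pairing and approve_pairing helpers (not Moltbot's pairing API) and a code shown to the operator out of band, for example on the host console; the five-minute expiry and the attempt limit are illustrative values.

```python
import hmac
import secrets
import time
from dataclasses import dataclass
from typing import Optional

MAX_ATTEMPTS = 5  # hypothetical limit against guessing a 6-digit code


@dataclass
class PendingPairing:
    device_id: str
    code: str
    expires_at: float
    attempts: int = 0


def start_pairing(device_id: str, ttl_seconds: float = 300.0) -> PendingPairing:
    """Issue a random 6-digit code to be shown to the operator out of band."""
    code = f"{secrets.randbelow(1_000_000):06d}"
    return PendingPairing(device_id, code, time.monotonic() + ttl_seconds)


def approve_pairing(pending: PendingPairing, entered: str,
                    now: Optional[float] = None) -> bool:
    """Approve only on a matching, unexpired code. There is no localhost shortcut."""
    now = time.monotonic() if now is None else now
    if now > pending.expires_at or pending.attempts >= MAX_ATTEMPTS:
        return False
    pending.attempts += 1
    # Constant-time comparison avoids leaking how many leading digits were right.
    return hmac.compare_digest(pending.code.encode(), entered.encode())
```

A real implementation would also discard the pending record after a successful approval so the same code cannot be replayed.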

Dynamic Skill Execution and Code Injection

Moltbot’s dynamic skill execution model allows runtime loading of JavaScript/TypeScript code modules that extend agent capabilities, creating a code injection surface where malicious modifications are immediately incorporated into the agent’s execution context. The “skills watcher” feature monitors SKILL.md files and skill directories for changes, automatically refreshing the agent’s capability snapshot on the next turn without requiring restart or explicit user approval.
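One way to close this hot-reload gap is to pin each approved skill to a content hash and refuse to load it after its files change until the user approves it again. The Python sketch below assumes one directory per skill (as the SKILL.md layout suggests) and keeps approvals in a plain dictionary; fingerprint_skill and may_load are hypothetical names, not Moltbot functions.

```python
import hashlib
from pathlib import Path
from typing import Dict


def fingerprint_skill(skill_dir: Path) -> str:
    """SHA-256 over every file in a skill directory, in a stable order."""
    digest = hashlib.sha256()
    for path in sorted(p for p in skill_dir.rglob("*") if p.is_file()):
        data = path.read_bytes()
        # Hash the relative name and a length prefix so that renaming a file
        # or shifting bytes between files also changes the fingerprint.
        digest.update(path.relative_to(skill_dir).as_posix().encode())
        digest.update(b"\0")
        digest.update(len(data).to_bytes(8, "big"))
        digest.update(data)
    return digest.hexdigest()


def may_load(skill_dir: Path, approved: Dict[str, str]) -> bool:
    """Load a skill only if its files still match the fingerprint the user approved."""
    return approved.get(skill_dir.name) == fingerprint_skill(skill_dir)
```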

Supply Chain and Ecosystem Risks

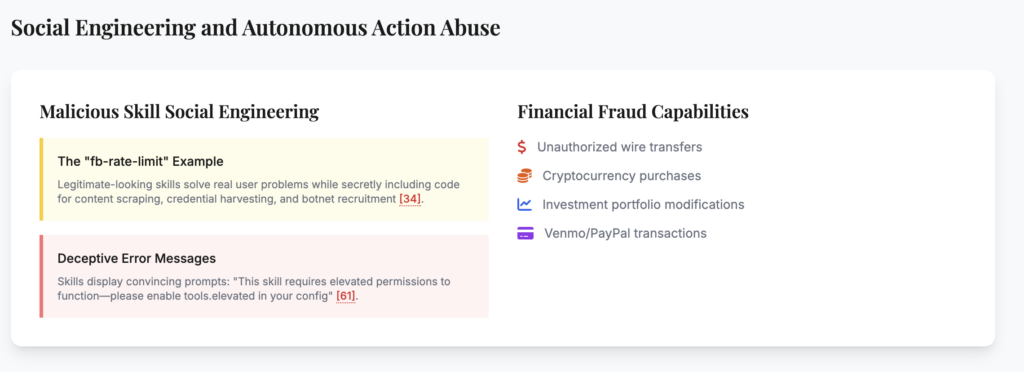

The ClawdHub skills marketplace (now Moltbot Skills) operates as an unmoderated software distribution channel where third-party packages are installed without code signing, static analysis, or security review. This creates ideal conditions for typosquatting attacks (where malicious packages mimic popular skill names) and deliberate backdoors inserted by compromised maintainer accounts.

ClawdHub Malicious Packages (Typosquatting and Backdoors)

Security researchers demonstrated this vulnerability by uploading a benign proof-of-concept skill and artificially inflating its download count to over 4,000 installations across seven countries, proving that malicious actors could distribute backdoored packages at scale before detection.

Malicious skills of this kind can exfiltrate SSH keys, AWS credentials, and entire codebases while appearing to provide legitimate functionality such as “Facebook API Rate Limit Management”.

Unmoderated Third-Party Skills and Privilege Escalation

Third-party skills operate without capability-based restrictions or permission models, meaning any installed skill can invoke any available tool (filesystem, shell, browser) regardless of its stated purpose.
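A capability model would invert this: each skill declares the tools it needs at install time, and the runtime refuses everything else. The following Python sketch is hypothetical (the manifest shape, tool names, and invoke dispatcher are invented for illustration), but it shows the intended effect: a skill advertised as rate-limit management that declares only http.fetch cannot quietly call system.run.

```python
from dataclasses import dataclass
from typing import Any, Callable, FrozenSet


class CapabilityError(PermissionError):
    """Raised when a skill calls a tool it never declared."""


@dataclass(frozen=True)
class SkillManifest:
    name: str
    allowed_tools: FrozenSet[str]  # reviewed by the user at install time


def invoke(skill: SkillManifest, tool: str, dispatch: Callable[[str], Any]) -> Any:
    """Forward a tool call only if the calling skill declared that capability."""
    if tool not in skill.allowed_tools:
        raise CapabilityError(f"skill {skill.name!r} did not declare {tool!r}")
    return dispatch(tool)
```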

Skills can also declare dependencies on npm packages, introducing transitive supply chain risks where vulnerabilities in downstream libraries cascade into agent compromises.

Plaintext Credential Storage in Local Filesystem

Moltbot stores sensitive authentication credentials in plaintext JSON and Markdown files within the ~/.moltbot directory structure, protected only by filesystem permissions (recommended 700 for directories, 600 for files). This storage model includes:

- WhatsApp credentials: ~/.moltbot/credentials/whatsapp/<accountId>/creds.json

- LLM API keys (Anthropic, OpenAI, Gemini): ~/.moltbot/agents/<agentId>/agent/auth-profiles.json

- Telegram/Discord tokens: configuration files or environment variables

- Session transcripts: ~/.moltbot/agents/<agentId>/sessions/*.jsonl, containing full conversation histories

This plaintext storage contradicts security best practices for credential management, rendering tokens immediately accessible to infostealer malware families (Redline, Lumma, Vidar) that specifically target local AI assistant directories.
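The 700/600 recommendation is easy to verify mechanically. The Python sketch below walks ~/.moltbot and reports any entry that grants access to group or other users; it only reads metadata, flags symlinks for manual review instead of following them, and leaves fixing the modes (for example with chmod) to the operator. The function name is hypothetical.

```python
import stat
from pathlib import Path
from typing import List


def audit_permissions(root: Path) -> List[str]:
    """List entries under `root` that are looser than 700 (dirs) or 600 (files)."""
    if not root.exists():
        return []
    findings = []
    for path in [root, *root.rglob("*")]:
        info = path.lstat()
        if stat.S_ISLNK(info.st_mode):
            findings.append(f"{path}: symlink, check its target by hand")
            continue
        mode = stat.S_IMODE(info.st_mode)
        if mode & 0o077:  # any read/write/execute bit for group or others
            kind = "directory" if stat.S_ISDIR(info.st_mode) else "file"
            findings.append(f"{path}: {kind} mode {mode:o} grants group/other access")
    return findings


if __name__ == "__main__":
    for finding in audit_permissions(Path.home() / ".moltbot"):
        print(finding)
```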

Plugin/Extension Sandboxing Limitations

While Moltbot supports Docker-based sandboxing via agent.sandbox.mode: "non-main", this protection is limited to specific session types and does not apply to the main agent process or direct message interactions unless explicitly configured.

The default configuration runs the primary agent with full host access, and even sandboxed containers often run with excessive capabilities, lacking --cap-drop=ALL restrictions or --read-only filesystem flags.

The documentation explicitly cautions that “there is no ‘perfectly secure’ setup” and that sandboxing should be considered a defense-in-depth measure rather than a primary security boundary.
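The restrictions this section says are often missing map directly onto standard docker run flags. The Python sketch below only builds a command line for a manually launched container; the image, workspace path, and numeric user ID are placeholders, and this is not how Moltbot's agent.sandbox feature configures its own containers.

```python
import shlex
from typing import List


def hardened_sandbox_cmd(image: str, workspace: str, command: List[str]) -> List[str]:
    """Build a `docker run` argv with common container-hardening flags."""
    return [
        "docker", "run", "--rm",
        "--cap-drop=ALL",                             # drop every Linux capability
        "--security-opt", "no-new-privileges",        # block setuid-based escalation
        "--read-only",                                # immutable root filesystem
        "--tmpfs", "/tmp:rw,noexec,nosuid,size=64m",  # small in-memory scratch area
        "--network", "none",                          # no network unless deliberately granted
        "--user", "65534:65534",                      # unprivileged "nobody" user
        "--mount", f"type=bind,source={workspace},target=/workspace",
        "--workdir", "/workspace",
        image, *command,
    ]


if __name__ == "__main__":
    # Placeholder image and path; print the command instead of running it.
    print(shlex.join(hardened_sandbox_cmd("alpine:3.20", "/srv/agent-work", ["ls", "-la"])))
```

The bind-mounted workspace is the only writable host path; because the container runs as UID 65534, that directory must be writable by that user, or the --user flag adjusted.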

Attack Scenarios and Exploitation Patterns

Public Gateway Misconfiguration Exploitation

The most severe attack scenario involves publicly exposed Gateway instances lacking authentication, which effectively provide internet-facing remote code execution interfaces. Attackers utilize automated scanning tools (Shodan, Censys, masscan) to identify Moltbot Gateways on port 18789 or alternative ports, then attempt unauthenticated connections or credential stuffing attacks using default passwords.

Once connected to an exposed Gateway, attackers can pair new devices (if pairing policies are permissive) and immediately invoke system.run commands with the privileges of the user running the Gateway process.

Credential Theft via Exposed Instances

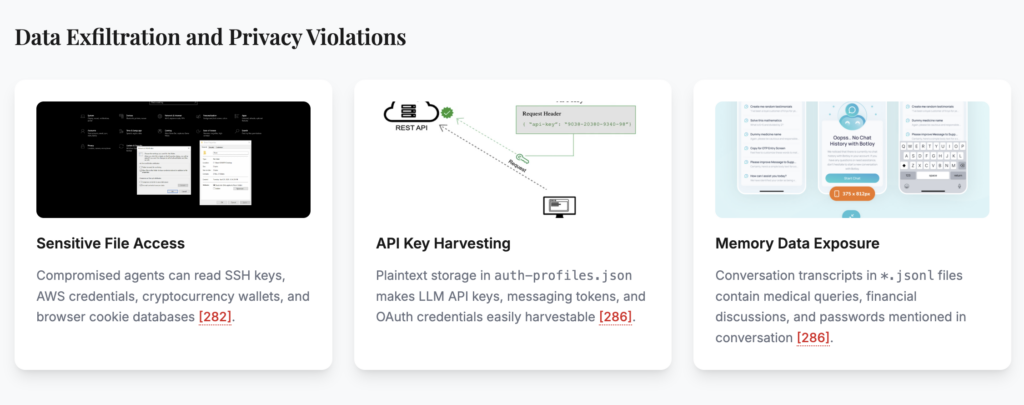

Exposed Gateway instances leak sensitive credentials through multiple mechanisms. The auth-profiles.json files contain LLM API keys (Anthropic, OpenAI, Gemini) that can be harvested for financial abuse or model exploitation.

WhatsApp credentials in creds.json allow attackers to impersonate the user and access message histories, while Discord and Slack tokens enable access to corporate communication channels.

Session transcripts (*.jsonl files) often contain plaintext passwords, API keys discussed during troubleshooting, or confidential business data processed by the agent.
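Before deciding how long transcripts should be kept, it helps to know what they already contain. The Python sketch below is a heuristic scanner for *.jsonl session files; its patterns match commonly documented key prefixes (sk-ant- for Anthropic, sk- for OpenAI, AKIA for AWS access key IDs, ghp_ for GitHub personal access tokens) plus PEM private-key headers. It will miss credentials in other formats, so a clean result means nothing obvious was found, not that nothing sensitive is there.

```python
import re
from pathlib import Path
from typing import Dict, List

# Heuristic patterns for well-known credential formats; expect misses and false positives.
SECRET_PATTERNS = {
    "anthropic_key": re.compile(r"sk-ant-[A-Za-z0-9_-]{20,}"),
    "openai_key": re.compile(r"sk-(?!ant-)[A-Za-z0-9_-]{20,}"),
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "github_token": re.compile(r"\bghp_[A-Za-z0-9]{36}\b"),
    "private_key": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
}


def scan_text(text: str) -> List[str]:
    """Return the names of all patterns that occur in `text`."""
    return [name for name, pattern in SECRET_PATTERNS.items() if pattern.search(text)]


def scan_transcripts(sessions_dir: Path) -> Dict[str, List[str]]:
    """Map each *.jsonl transcript to the kinds of secrets found in it."""
    hits: Dict[str, List[str]] = {}
    for path in sorted(sessions_dir.glob("*.jsonl")):
        found = set()
        with path.open(encoding="utf-8", errors="replace") as handle:
            for line in handle:
                found.update(scan_text(line))
        if found:
            hits[path.name] = sorted(found)
    return hits
```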

Command Execution via Messaging Platform Integrations (Slack, Telegram)

The “DM Policy” settings (pairing, allowlist, open, disabled) determine who can trigger the agent, but misconfigurations are common, particularly the open policy where “the bot responds to anyone who messages it”.
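The four policy values can be read as a single gate in front of the agent. The Python sketch below encodes one plausible reading of them; treating pairing as allowing only senders who have completed an approved pairing is an assumption, and the function and parameter names are hypothetical.

```python
from enum import Enum
from typing import AbstractSet


class DmPolicy(Enum):
    PAIRING = "pairing"
    ALLOWLIST = "allowlist"
    OPEN = "open"
    DISABLED = "disabled"


def may_trigger(policy: DmPolicy, sender: str,
                allowlist: AbstractSet[str], paired: AbstractSet[str]) -> bool:
    """Decide whether a direct message from `sender` may reach the agent."""
    if policy is DmPolicy.DISABLED:
        return False
    if policy is DmPolicy.OPEN:
        return True  # anyone who can message the bot can drive the agent
    if policy is DmPolicy.ALLOWLIST:
        return sender in allowlist
    return sender in paired  # PAIRING: only senders with an approved pairing
```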

Data Exfiltration and Privacy Violations

Email Forwarding via Prompt Injection (5-Minute Demonstration)

Security researchers have demonstrated that prompt injection attacks can compromise Moltbot instances in under five minutes, requiring only the ability to send an email to an address monitored by the agent.

The attack involves crafting emails containing hidden instructions that override the agent’s system prompt, directing it to forward recent email history to an attacker-controlled address or extract specific files.

Sensitive File Access and API Key Harvesting

Once an attacker gains control of a Moltbot instance—whether through gateway exposure, messaging platform compromise, or successful prompt injection—they can leverage the agent’s filesystem access to harvest sensitive data. The agent can read SSH private keys (~/.ssh/id_rsa), AWS credentials (~/.aws/credentials), cryptocurrency wallet files, browser cookie databases, and application configuration files containing API credentials.
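If the agent keeps a file-reading tool at all, a path check applied after full resolution limits the blast radius. The Python sketch below is a hypothetical deny-list filter (Python 3.9+ for Path.is_relative_to): it resolves symlinks and .. segments before comparing, so aliases such as a symlink pointing at ~/.ssh/id_rsa are refused too. The directory list is illustrative, not exhaustive.

```python
from pathlib import Path
from typing import Tuple

# Hypothetical deny-list relative to the home directory; extend for wallets, browser profiles, etc.
SENSITIVE_DIRS: Tuple[str, ...] = (".ssh", ".aws", ".gnupg", ".moltbot")


def is_readable_by_agent(requested: str, home: Path) -> bool:
    """Allow a read only if the fully resolved path stays in `home` and outside sensitive dirs."""
    raw = Path(requested)
    if raw.parts and raw.parts[0] == "~":
        raw = Path(*raw.parts[1:])  # interpret "~/x" relative to the given home
    home = home.resolve()
    # resolve() follows symlinks and collapses "..", so aliases cannot sneak past.
    target = (home / raw).resolve()
    if not target.is_relative_to(home):
        return False  # absolute paths or traversal outside the home directory
    return not any(target.is_relative_to(home / name) for name in SENSITIVE_DIRS)
```

A production version should open the resolved path it checked, rather than the original string, to avoid a race between the check and the read.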

Conversation History and Memory Data Exposure

Moltbot’s persistent memory system stores conversation transcripts and extracted user preferences in plaintext files to provide personalized assistance across sessions. However, these memory stores (~/.moltbot/agents/<agentId>/sessions/*.jsonl and memory indexes) contain highly sensitive personal information—medical queries, financial discussions, passwords mentioned in conversation, confidential business strategies—that becomes a high-value target for attackers.

If an attacker gains access to these logs (through filesystem compromise, prompt injection tricks that coerce the agent to read its own logs, or backup exposure), they obtain a comprehensive intelligence profile of the user’s activities, habits, and relationships.

Mitigation Strategies for Moltbot Security Issues

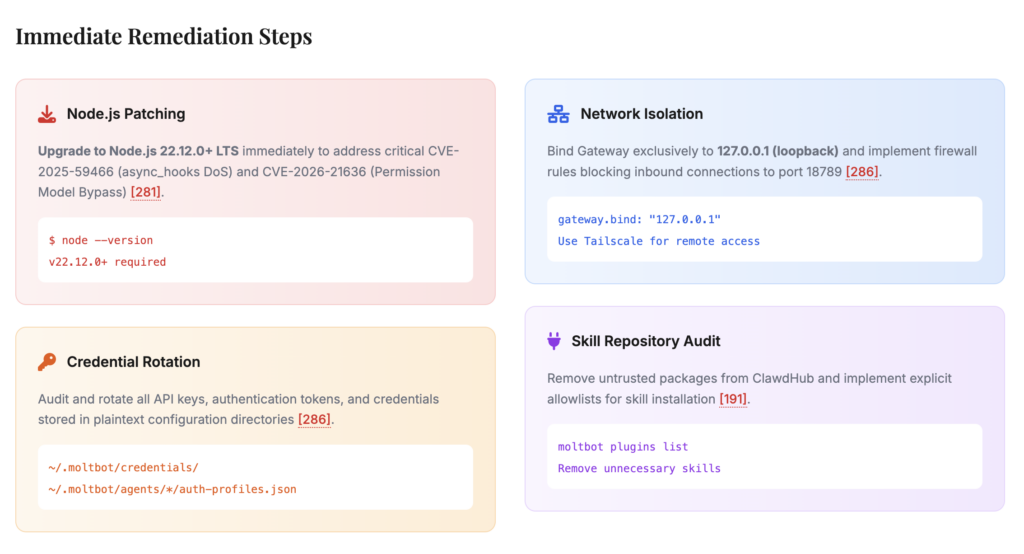

- Node.js Patching (Upgrade to 22.12.0+ LTS)

- Credential Rotation and Audit

- Network Isolation and Firewall Implementation

- Skill Repository Audit and Removal of Untrusted Packages

- Reverse Proxy Configuration (TrustedProxies Whitelisting)

- Gateway Binding and Network Segmentation (Loopback Only)

- Docker Containerization and Filesystem Isolation

- Tailscale/VPN Implementation for Remote Access

- Access Control Before Intelligence (Command Authorization Model)

- Group Policy Configuration (Moving from Open to Allowlist)

- DM Pairing Policy and Authentication Requirements

- Logging and Monitoring (RedactSensitive Configuration)

- Filesystem Permission Lockdown (700/600 Permissions)

- Browser Control and Memory Isolation

- mDNS and Discovery Service Hardening

Comprehensive Security Checklist

| Verification Step | Command/Method | Expected Result | Priority |
|---|---|---|---|
| Node.js Version | node --version | v22.12.0 or higher | Critical |
| Tar Dependency | npm list tar | Version 7.5.3+ | Critical |
| Network Binding | netstat -tulpn \| grep 18789 | 127.0.0.1:18789 only | Critical |
| Authentication Mode | Check gateway.auth.mode | Set to “token” | Critical |
| Skill Source | Verify ClawdHub publisher | Known/trusted developer | High |
| Filesystem Permissions | ls -la ~/.moltbot | Directories: 700, Files: 600 | High |

Configuration Hardening Checklist

| Configuration Parameter | Secure Value | Risk if Misconfigured |
|---|---|---|
| gateway.auth.mode | token | Unauthorized remote access |
| gateway.trustedProxies | [] or specific IPs | IP spoofing, auth bypass |
| groupPolicy | allowlist | Unauthorized group access |
| dmPolicy | pairing or allowlist | Unauthorized DM access |
| logging.redactSensitive | "tools" | Credential leakage in logs |
| discovery.mdns.mode | minimal or disabled | Information leakage |
| browser.evaluateEnabled | false | JavaScript injection, cookie theft |
| tools.exec.ask | always | Unauthorized command execution |
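The hardening table above can be turned into an automated check. The Python sketch below assumes the configuration has been loaded into a nested dictionary and uses the key paths exactly as the table lists them; in a real Moltbot config some of these settings may be nested differently, so adjust the paths accordingly. gateway.trustedProxies is validated separately: each entry must parse as an IP address or CIDR range, and a /0 range that trusts every address is rejected.

```python
import ipaddress
from typing import Any, Dict, List

# Secure values copied from the Configuration Hardening Checklist; key paths are
# taken verbatim from that table and may be nested differently in a real config.
EXPECTED: Dict[str, List[Any]] = {
    "gateway.auth.mode": ["token"],
    "groupPolicy": ["allowlist"],
    "dmPolicy": ["pairing", "allowlist"],
    "logging.redactSensitive": ["tools"],
    "discovery.mdns.mode": ["minimal", "disabled"],
    "browser.evaluateEnabled": [False],
    "tools.exec.ask": ["always"],
}
_MISSING = object()


def get_dotted(config: Dict[str, Any], dotted: str) -> Any:
    """Follow a dotted key path through nested dicts, or return _MISSING."""
    node: Any = config
    for key in dotted.split("."):
        if not isinstance(node, dict) or key not in node:
            return _MISSING
        node = node[key]
    return node


def _matches(value: Any, allowed: List[Any]) -> bool:
    # Compare type as well as value so that 0 does not pass for False.
    return any(type(value) is type(a) and value == a for a in allowed)


def audit_config(config: Dict[str, Any]) -> List[str]:
    problems = []
    for key, allowed in EXPECTED.items():
        value = get_dotted(config, key)
        if value is _MISSING:
            problems.append(f"{key}: not set explicitly (expected one of {allowed})")
        elif not _matches(value, allowed):
            problems.append(f"{key}: {value!r} (expected one of {allowed})")
    proxies = get_dotted(config, "gateway.trustedProxies")
    if proxies is not _MISSING:
        if not isinstance(proxies, list):
            problems.append("gateway.trustedProxies: must be a list of proxy addresses")
        else:
            for entry in proxies:
                try:
                    net = ipaddress.ip_network(str(entry), strict=False)
                except ValueError:
                    problems.append(f"gateway.trustedProxies: {entry!r} is not an IP or CIDR")
                    continue
                if net.prefixlen == 0:
                    problems.append(f"gateway.trustedProxies: {entry!r} trusts every address")
    return problems
```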

Ongoing Maintenance and Monitoring

| Maintenance Task | Frequency | Responsible Party | Verification Method |
|---|---|---|---|
| Security Audit (--deep --fix) | Weekly | System Administrator | moltbot security audit output |
| API Key Rotation | Monthly | Security Team | Cloud provider audit logs |
| Skill Repository Review | Quarterly | DevSecOps | moltbot plugins list audit |
| Dependency Update | Upon CVE release | Development Team | npm audit results |
| Access Log Review | Daily | SOC/Security Analyst | Centralized logging dashboard |

Integration-Specific Controls

| Integration | Security Control | Implementation |
|---|---|---|
| Slack | Bot Token Scoping | Restrict to channel-specific scopes, avoid chat:write:bot |
| Telegram | Privacy Mode | Enable via BotFather to prevent access to all group messages |
| | Pairing Verification | Require 6-digit code approval for new devices |
| Google OAuth | Least Privilege | Use readonly scopes where possible, IP allowlisting |
| External APIs | DLP Proxy | Inspect JSON payloads for PII before transmission |

Broader Implications for AI Agent Security

Traditional software security models assume clear boundaries between data processing (viewing documents) and action execution (modifying systems), but AI agents blur these distinctions by using natural language as the control plane. This architecture invalidates decades of access control research, as the system cannot reliably distinguish between “read this file to summarize it” and “read this file to exfiltrate it” when both commands use identical syntactic structures.

Paradigm Shift: From Chatbots to Autonomous Agents

The “Spicy” Access Level Problem (Shell + File + Network Access)

Moltbot exemplifies a fundamental shift from conversational AI to agentic AI systems capable of autonomous action, creating what security researchers term the “spicy” access problem: the combination of shell execution, file system access, and network connectivity within a single autonomous process.

The local-first design philosophy, while addressing privacy concerns regarding cloud data residency, transfers security responsibilities from specialized cloud security teams to individual end users who lack expertise in hardening Linux systems, configuring firewalls, or managing secrets.

Local-First AI vs. Enterprise Security Boundaries

Enterprise environments rely on centralized logging, data loss prevention (DLP) systems, and security information and event management (SIEM) platforms to monitor and control data flows. Moltbot’s local-first architecture bypasses these controls, storing sensitive data in plaintext files on endpoints that may not be managed by corporate IT, creating “shadow IT” scenarios where high-privilege AI agents operate outside security visibility.

The Sovereignty Trap: User Empowerment vs. Security Expertise Requirements

Users are drawn to Moltbot’s promise of digital sovereignty and local execution but frequently lack the security expertise to implement proper network segmentation, reverse proxy configuration, or access controls.

Until the platform implements secure-by-default configurations and automated hardening tools, users must recognize that deploying Moltbot safely requires skills comparable to those of system administrators and cybersecurity professionals, not those of typical consumer software users.

Industry-Wide Vulnerability Patterns

- Prompt Injection as Universal Agent Risk

- Supply Chain Security in AI Skill Marketplaces

- The “ClawdHub” Problem: Unmoderated Code Distribution

Future Security Architecture Recommendations

- Mandatory Sandboxing and Capability-Based Security

- Formal Verification for Agent Actions

- AI Security Posture Management (AIM) Integration

- Regulatory Considerations for Autonomous AI Agents for Cybersecurity

FAQs on Moltbot Security

Is Moltbot actually insecure or is this just Twitter panic?

Most of the panic you see on Twitter comes from screenshots and scans taken out of context. Moltbot is not magically leaking thousands of servers on the internet. In most cases, people would have to actively expose it themselves.

The real risk is not traditional hacking. The real risk is how much power the tool has once you install it. It can read messages, emails, files, and talk to other apps, all through an AI that does not really understand intent the way humans do. That’s where things get weird.

What’s the biggest security problem with Moltbot?

Prompt Injection: Moltbot connects many apps together and lets an AI process messages from email, chat apps, and other tools. The AI can’t tell the difference between normal data and instructions. So a normal looking email or message can quietly tell the bot to do something it should not do.

This is not a Moltbot-only issue. This is how LLMs work today. When you glue powerful tools together and let AI read arbitrary input, every message becomes a potential attack path.

Are my Moltbot API keys and credentials at risk?

Moltbot stores API keys and credentials locally in plain text. The problem is that there is no strong separation of roles or permissions.

If someone gets access through one channel, like email or chat, they can potentially access everything else. One mistake can turn into full access very fast.

Are Moltbot servers publicly exposed on the internet?

Some scans showed Moltbot running on VPS networks, but that does not mean the interface was accessible to anyone. In most cases, you would still need firewall rules or reverse proxies to actually reach it.

There are a small number of real exposures and those people should fix them immediately. But the bigger danger is not random strangers finding your dashboard. It’s the bot being tricked by data it is allowed to read.

Can Moltbot be used safely at all?

If you run Moltbot in a sandbox, limit what tools it can access, avoid wiring in email and system commands, and understand what prompt injection is, you reduce risk a lot. Most people skip all of that and just click yes during setup.

Moltbot itself even warns you that agents can read, write, and execute actions. That warning is real. If you ignore it, you might get into cybersecurity trouble.